Converting NVIDIA DeepStream Pipelines to Intel® Deep Learning Streamer (Intel® DL Streamer) Pipeline Framework#

This document describes the steps to convert a pipeline from NVIDIA DeepStream to Intel® DL Streamer Pipeline Framework. We also have a running example through the document that will be updated at each step to help show the modifications being described.

Note

The intermediate steps of the pipeline are not meant to run. They are simply there as a reference example of the changes being made in each section.

Contents#

Preparing Your Model#

To use Intel® DL Streamer Pipeline Framework and OpenVINO™ Toolkit the model needs to be in Intermediate Representation (IR) format. To convert your model to this format, please follow model preparation steps.

Configuring Model for Intel® DL Streamer#

NVIDIA DeepStream uses a combination of model configuration files and DeepStream element properties to specify interference actions as well as pre- and post-processing steps before/after running inference as documented here: here.

Similarly, Intel® DL Streamer Pipeline Framework uses GStreamer element properties for inference settings and model proc files for pre- and post-processing steps.

The following table shows how to map commonly used NVIDIA DeepStream configuration properties to Intel® DL Streamer settings.

NVIDIA DeepStream config file |

NVIDIA DeepStream element property |

Intel® DL Streamer model proc file |

Intel® DL Streamer element property |

Description |

|---|---|---|---|---|

model-engine-file <path> |

model-engine-file <path> |

model <path> |

Path to inference model network file. |

|

labelfile-path <path> |

labels-file <path> |

Path to .txt file containing object classes. |

||

network-type <0..3> |

gvadetect for detection, instance segmentation

gvaclassify for classification, semantic segmentation

|

Type of inference operation. |

||

batch-size <N> |

batch-size <N> |

batch-size <N> |

Number of frames batched together for a single inference. |

|

maintain-aspect-ratio |

resize: aspect-ratio |

Number of frames batched together for a single inference. |

||

num-detected-classes |

Number of classes detected by the model, inferred from label file by Intel® DL Streamer. |

|||

interval <N> |

interval <N> |

inference-interval <N+1> |

Inference action executed every Nth frame, please note Intel® DL Streamer value is greater by 1. |

|

threshold |

threshold |

Threshold for detection results. |

GStreamer Pipeline Adjustments#

In the following sections we will be converting DeepStream pipeline to Pipeline Framework. The DeepStream pipeline is taken from one of the examples here. It reads a video stream from the input file, decodes it, runs inference, overlays the inferences on the video, re-encodes and outputs a new .mp4 file.

filesrc location=input_file.mp4 ! decodebin3 ! \

nvstreammux batch-size=1 width=1920 height=1080 ! queue ! \

nvinfer config-file-path=./config.txt ! \

nvvideoconvert ! "video/x-raw(memory:NVMM), format=RGBA" ! \

nvdsosd ! queue ! \

nvvideoconvert ! "video/x-raw, format=I420" ! videoconvert ! avenc_mpeg4 bitrate=8000000 ! qtmux ! filesink location=output_file.mp4

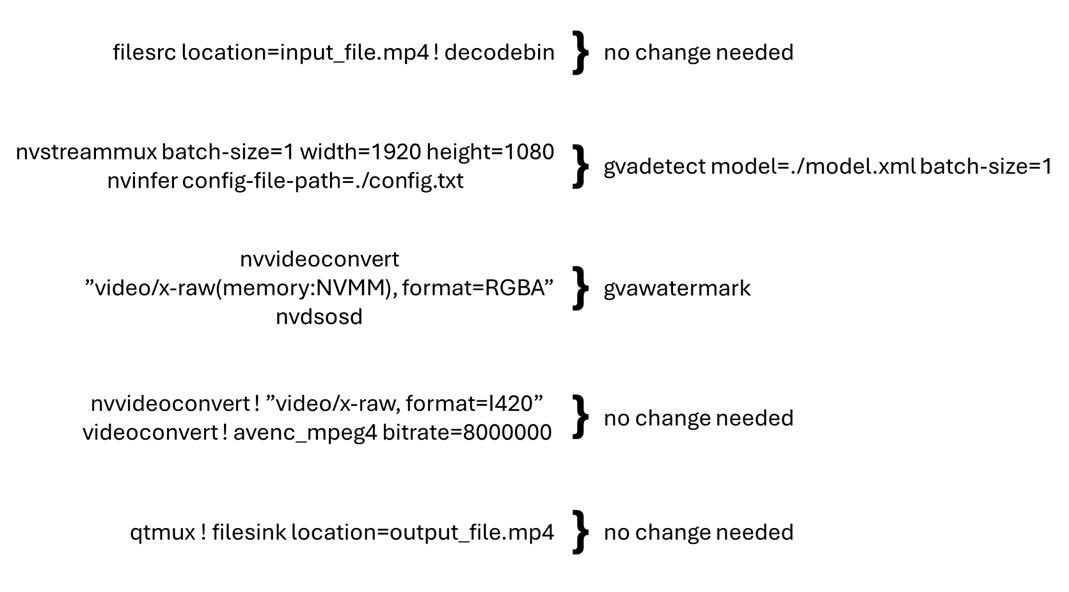

The below mapping represents the typical changes that need to be made to the pipeline to convert it to Intel® DL Streamer Pipeline Framework. The pipeline is broken down into sections based on the elements used in the pipeline.

The next chapters give more details on how to replace each element.

Mux and Demux Elements#

Remove

nvstreammuxandnvstreamdemuxand all their properties.These elements combine multiple input streams into a single batched video stream (NVIDIA-specific). Intel® DL Streamer takes a different approach: it employs generic GStreamer syntax to define parallel streams. The cross-stream batching happens at the inferencing elements by setting the same

model-instance-idproperty.In this example, there is only one video stream so we can skip this for now. See more on how to construct multi-stream pipelines in the following section Multiple Input Streams below.

At this stage we have removed nvstreammux and the queue that

followed it. Notably, the batch-size property is also removed. It

will be added in the next section as a property of the Pipeline Framework

inference elements.

filesrc location=input_file.mp4 ! decodebin3 ! \

nvinfer config-file-path=./config.txt ! \

nvvideoconvert ! "video/x-raw(memory:NVMM), format=RGBA" ! \

nvdsosd ! queue ! \

nvvideoconvert ! "video/x-raw, format=I420" ! videoconvert ! avenc_mpeg4 bitrate=8000000 ! qtmux ! filesink location=output_file.mp4

Inferencing Elements#

Remove

nvinferand replace it withgvainference,gvadetectorgvaclassifydepending on the following use cases:For doing detection on full frames and outputting a region of interest, use gvadetect. This replaces

nvinferwhen it is used in primary mode.Replace

config-file-pathproperty withmodelandmodel-proc.gvadetectgenerates GstVideoRegionOfInterestMeta.

For doing classification on previously detected objects, use gvaclassify. This replaces nvinfer when it is used in secondary mode.

Replace

config-file-pathproperty withmodelandmodel-proc.gvaclassifyrequires GstVideoRegionOfInterestMeta as input.

For doing generic full frame inference, use gvainference. This replaces

nvinferwhen used in primary mode.gvainferencegenerates GstGVATensorMeta.

In this example we will use gvadetect to infer on the full frame and

output region of interests. batch-size was also added for

consistency with what was removed above (the default value is 1 so it is

not needed). We replaced config-file-path property with model

and model-proc properties as described in “Configuring Model for Intel® DL Streamer” above.

filesrc location=input_file.mp4 ! decodebin3 ! \

gvadetect model=./model.xml model-proc=./model_proc.json batch-size=1 ! queue ! \

nvvideoconvert ! "video/x-raw(memory:NVMM), format=RGBA" ! \

nvdsosd ! queue ! \

nvvideoconvert ! "video/x-raw, format=I420" ! videoconvert ! avenc_mpeg4 bitrate=8000000 ! qtmux ! filesink location=output_file.mp4

Video Processing Elements#

Replace NVIDIA-specific video processing elements with native GStreamer elements.

nvvideoconvertwithvapostproc(GPU) orvideoconvert(CPU).If the

nvvideoconvertis being used to convert to/frommemory:NVMMit can just be removed.

nvv4ldecodercan be replaced withva{CODEC}dec, for examplevah264decfor decode only. Alternatively, the native GStreamer elementdecodebin3can be used to automatically choose an available decoder.

Some caps filters that follow an inferencing element may need to be adjusted or removed. Pipeline Framework inferencing elements do not support color space conversion in post-processing. You will need to have a

vapostprocorvideoconvertelement to handle this.

Here we removed a few caps filters and instances of nvvideoconvert

used for conversions from DeepStream’s NVMM because Pipeline Framework uses

standard GStreamer structures and memory types. We will leave the

standard gstreamer element videoconvert to do color space conversion

on CPU, however if available, we suggest using vapostproc to run

on Intel Graphics. Also, we will use the GStreamer standard element

decodebin to choose an appropriate demuxer and decoder depending on

the input stream as well as what is available on the system.

filesrc location=input_file.mp4 ! decodebin3 ! \

gvadetect model=./model.xml model-proc=./model_proc.json batch-size=1 ! queue ! \

nvdsosd ! queue ! \

videoconvert ! avenc_mpeg4 bitrate=8000000 ! qtmux ! filesink location=output_file.mp4

Metadata Elements#

Replace

nvtrackerwith gvatrackRemove

ll-lib-fileproperty. Optionally replace withtracking-typeif you want to specify the algorithm used. By default it will use the ‘short-term’ tracker.Remove all other properties.

Replace

nvdsosdwith gvawatermarkRemove all properties

Replace

nvmsgconvwith gvametaconvertgvametaconvertcan be used to convert metadata from inferencing elements to JSON and to output metadata to the GST_DEBUG log.It has optional properties to configure what information goes into the JSON object including frame data for frames with no detections found, tensor data, the source the inferences came from, and tags, a user defined JSON object that is attached to each output for additional custom data.

Replace

nvmsgbrokerwith gvametapublishgvametapublishcan be used to output the JSON messages generated bygvametaconvertto stdout, file, MQTT or Kafka.

The only metadata processing that is done in this pipeline is to overlay

the inferences on the video for which we use gvawatermark.

filesrc location=input_file.mp4 ! decodebin3 ! \

gvadetect model=./model.xml model-proc=./model_proc.json batch-size=1 ! queue ! \

gvawatermark ! queue ! \

videoconvert ! avenc_mpeg4 bitrate=8000000 ! qtmux ! filesink location=output_file.mp4

Multiple Input Streams#

nvstreammux element,

Pipeline Framework uses existing GStreamer mechanisms to define multiple parallel video processing streams.

This approach allow to reuse native GStreamer elements within the pipeline.

The input stream can share same Inference Engine if they have same model-instance-id property.

This allows creating inference batching across streams.nvstreammux ! nvinfer batch-size=2 config-file-path=./config.txt ! nvstreamdemux \

filesrc ! decode ! mux.sink_0 filesrc ! decode ! mux.sink_1 \

demux.src_0 ! encode ! filesink demux.src_1 ! encode ! filesink

When using Pipeline Framework, the command line defines operations for two parallel streams using native GStreamer syntax.

By setting model-instance-id to the same value, both streams share the same instance gvadetect element.

Hence, the shared inference parameters (model, batch size, …) can be defined only in the first line.

filesrc ! decode ! gvadetect model-instance-id=model1 model=./model.xml batch-size=2 ! encode ! filesink \

filesrc ! decode ! gvadetect model-instance-id=model1 ! encode ! filesink

DeepStream to DLStreamer Elements Mapping Cheetsheet#

Below table lists quick reference for mapping typical DeepStream elements to Intel® DL Streamer elements or GStreamer.

DeepStream Element |

DLStreamer Element |

|---|---|

DeepStream Element |

GStreamer Element |

|---|---|

nvvideoconvert |

videoconvert |

nvv4l2decoder |

decodebin3 |

nvv4l2h264dec |

vah264dec |

nvv4l2h265dec |

vah265dec |

nvv4l2h264enc |

va264enc |

nvv4l2h265enc |

va265enc |

nvv4l2vp8dec |

vavp8dec |

nvv4l2vp9dec |

vavp9dec |

nvv4l2vp8enc |

vavp8enc |

nvv4l2vp9enc |

vavp9enc |